Imagine you come up with an app that eliminates the need for haircuts. It’s amazing, it’s innovative, it’s game-changing.

You want to milk this opportunity for everything it’s worth, but you’ve got some questions on how to do that:

Pricing. Since just about everybody can use it, will you make more money charging $0.99 for it, or since it’ll save a few people a lot of money, should you charge $99?

Target Segment. Is it best to target Supercuts customers who clearly hate getting haircuts so much they’re willing to walk around looking like shit? Or should you target vain power brokers who would love to look perfect all the time?

Marketing. What’s the best marketing message:

Look your hairy best!

or

No more hair salon small talk. You’re welcome.

Before Crowdtesting

If you wanted to know which combination of the above generated the most revenue, you had one choice:

- Build the product, and then a/b test it.

Sure, you could a/b test landing pages before you built the product to see which combination got you more email addresses, but you’re not going to be able to pay employees in email addresses. And what if the landing page skews your results (e.g. what if Supercuts customers give out their email addresses more easily than CEOs)?

At the end of the day, we don’t want to base our business decisions on visitor counts, Facebook likes, or email signups. We want to base them on $. Crowdtesting helps us do that.

What is Crowdtesting?

Crowdtesting is the combination of crowdfunding and a/b testing to validate business model hypotheses. But that’s a boring definition. This is better:

Crowdtesting will tell you how much $ your idea is worth, before you build it.

Here’s the video version from the 2012 Lean Startup Conference:

Crowdtesting in action

Some screenshots from the “crowdtesting” experiment currently running for Bounce.

Crowdtesting Step-by-Step

Step #1 – Come up with an idea for a product

While the principles apply to any opportunity, this particular technique is probably best suited for B2C opportunities that want to make money. If you’re doing an ad-supported, or “why does everyone keep asking us how we’ll make money?” play, I’m sorry, I don’t have answers for you.

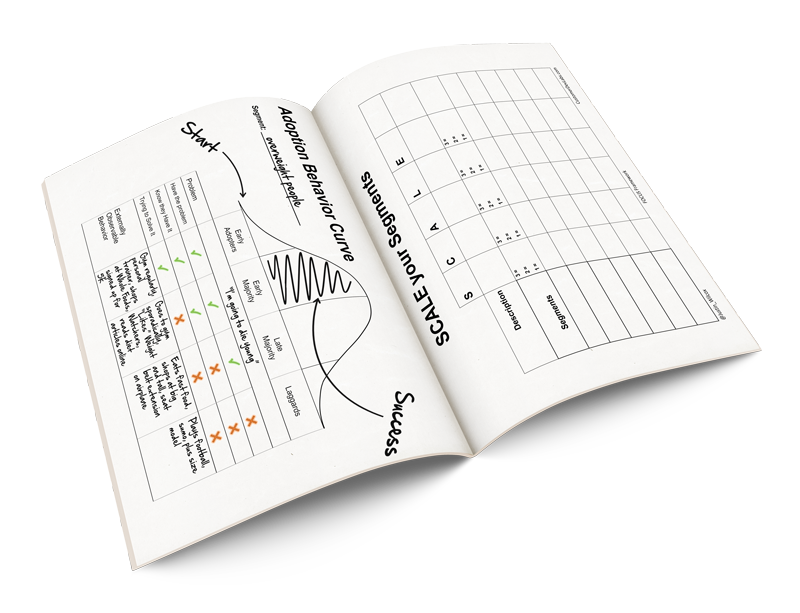

Step #2 – Figure out what you want to test

In my case, I wanted to know which of these would make more money:

- Selling Bounce directly to people who run late

- Offering Bounce as a gift people could give to others who run late

and then I wanted to know the optimal price point for the winner of the above.

Step #3 – Fork Selfstarter

The Lockitron folks were kind enough to open source the crowdfunding platform they built – Selfstarter. That makes it easy for us to launch our own crowdfunding project, and incorporate a/b testing.

Step #4 – Set Up Amazon Payments

It can take a couple days to get your Amazon Payments account approved, which you’ll use to accept people’s credit card info, so be sure to start this process well in advance. Instructions are in the Selfstarter FAQ.

Note: I got a “sorry you don’t meet the requirements of a business account”-type email when I first signed up but when I told them what I was doing, I eventually got approval.

Step #5 – Set Up A/B Testing

The Experiments functionality within Google Analytics is terrific for this kind of project. I actually tried to use them but I ran into trouble running two Selfstarters at once. I’m sure it’s a solvable problem, I just didn’t have time to resolve it.

Instead I simply ran one variation at a time (e.g. $10 instead of $5), and added a “variation” column to my orders table so I could count the number of pre-orders for each variation. Since the vast majority of my traffic was driven by an email list I segmented (more below), I was able to control exactly how many people saw each variation, and could compare apples-to-apples.
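To make the “variation” column concrete, here is a minimal sketch in Python using an in-memory SQLite database. It is not Selfstarter’s actual schema (Selfstarter is a Rails app): the table layout, the `email` and `price` columns, the variation labels, and the function names are all assumptions for illustration. It shows a single query that counts pre-orders and totals revenue per variation.

```python
import sqlite3


def make_demo_db():
    """Build an in-memory orders table with a hypothetical 'variation' column."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders ("
        "id INTEGER PRIMARY KEY, email TEXT, price REAL, variation TEXT)"
    )
    # Made-up sample orders, each tagged with the variation the visitor saw.
    conn.executemany(
        "INSERT INTO orders (email, price, variation) VALUES (?, ?, ?)",
        [
            ("a@example.com", 5.0, "price_5"),
            ("b@example.com", 5.0, "price_5"),
            ("c@example.com", 10.0, "price_10"),
        ],
    )
    return conn


def preorders_by_variation(conn):
    """Return {variation: (order_count, total_revenue)}."""
    rows = conn.execute(
        "SELECT variation, COUNT(*), SUM(price) FROM orders GROUP BY variation"
    ).fetchall()
    return {variation: (count, revenue) for variation, count, revenue in rows}


if __name__ == "__main__":
    summary = preorders_by_variation(make_demo_db())
    for variation, (count, revenue) in summary.items():
        print(variation, count, revenue)
```

Because only one variation runs at a time, stamping each order with the variation that was live when it came in is enough to separate the results afterwards.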

Step #6 – Launch

Yeah, baby!

Step #7 – Send traffic

Now you need to send traffic to your campaign. The great part about this step is that it’s fantastic practice to see how easy it’ll be to generate interest if you actually build the product.

As for how much you’ll need, I’m no statistician, but my thinking is, “just enough to run your experiment.” So far my experiments have been fairly conclusive, so I’ve gotten by with a couple thousand visitors for each variation.
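If you want a rough check on whether your traffic was “just enough,” one common option is a two-proportion z-test on the pre-order rates. This is offered as an illustration, not as the method used for Bounce. The sketch uses only Python’s standard library; the function name and the visitor and order counts in the usage lines are hypothetical. Note that it compares conversion rates rather than $/visitor, so treat it as a sanity check and not a verdict.

```python
import math


def two_proportion_z_test(orders_a, visitors_a, orders_b, visitors_b):
    """Return (z, two_sided_p_value) for the difference in pre-order rates."""
    rate_a = orders_a / visitors_a
    rate_b = orders_b / visitors_b
    # Pooled rate under the assumption that both variations convert equally.
    pooled = (orders_a + orders_b) / (visitors_a + visitors_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if std_err == 0:
        # Nobody ordered (or everybody did): the rates cannot be told apart.
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail area
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: 2,000 visitors per variation, 20 vs. 40 pre-orders.
    z, p = two_proportion_z_test(20, 2000, 40, 2000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the gap between the two pre-order rates is unlikely to be noise; a large one suggests sending more traffic before picking a winner.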

As far as how I generated my traffic. I had posted a landing page collecting email addresses for people interested in Bounce for iPhone, before. The majority of my traffic came from Hacker News, press, and emails to folks who signed up on Bounce’s landing page.

Step #8 – Analyze the results

Once the orders come in, the fun starts!

The metric I found most useful was $/visitor. For example:

- With a $5 price tag, 1.4% of visitors pre-order Bounce. That’s $.07/visitor.

- With a $10 price tag, 1.7% of visitors pre-order Bounce. That’s $.17/visitor.

Sample size: 11,108

The combination of messaging and price points that generates the biggest $/visitor is the combination you want.
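The arithmetic behind $/visitor is simply price times pre-order rate. Here is a short Python sketch of it; the function names and the dictionary layout are assumptions for illustration, while the prices and rates are the ones quoted above.

```python
def revenue_per_visitor(price, conversion_rate):
    """Expected pre-order revenue per visitor for one variation."""
    return price * conversion_rate


def best_variation(variations):
    """Pick the label with the highest $/visitor.

    `variations` maps a label to a (price, conversion_rate) pair.
    """
    return max(variations, key=lambda label: revenue_per_visitor(*variations[label]))


if __name__ == "__main__":
    # Price points and pre-order rates quoted in the article.
    observed = {"$5": (5, 0.014), "$10": (10, 0.017)}
    for label, (price, rate) in observed.items():
        print(f"{label}: ${revenue_per_visitor(price, rate):.2f}/visitor")
    print("winner:", best_variation(observed))
```

With the figures above this reproduces $.07 and $.17 per visitor, so the $10 variation wins even though its pre-order rate is only slightly higher.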

Important Notes:

There are a few non-obvious things you want to keep in mind when launching a crowdtesting project:

Crowdtesting isn’t perfect. I’m clearly a fan, but crowdtesting is by no means perfect. It’s probably best suited for B2C plays that can charge money. We also don’t have data yet on how accurately it predicts sales once the product launches. You need to be very aware of where your traffic comes from, because that can skew your results as well. Some mitigations for these issues are below. Overall, crowdtesting is no silver bullet, but it’s clearly better than email sign-ups on a landing page or building a product no one wants.

Your goal ISN’T to raise lots of money. Avoid trying to bring in tons of money during this campaign; that’s not the goal. The goal is to test a hypothesis in a scenario that resembles market conditions when your product actually launches. If you make getting lots of money a priority, you’re likely to try tactics that won’t be applicable when your product launches. In fact, I eliminated the total $ raised from my crowdtest altogether – Bounce’s published “goal” was in terms of backers (5,000).

Don’t sell your story. A lot of consumers support crowdfunding projects not because they want the rewards, but because they want to support the person raising the money. You don’t want that. You want people pre-ordering your product because they want it so badly, they’re willing to put down money early, to ensure it gets made. Avoid talking much about yourself during the campaign and instead focus on the value prop – that’s what you’re trying to test.

Do no harm. Crowdtesting only works because consumers trust the crowdfunding process. If crowdtesters start charging credit cards before they build their products, don’t deliver on the promises they make, or otherwise mislead consumers, our customers will lose faith in the process and the tool will lose its value.

Set your goal high. Remember, your primary objective is to test a hypothesis. If you set a goal that’s low and you hit it, you’re obligated to build the product (see “Do no harm”), even if it turns out your $/visitor is much less than you need for this venture to be profitable. Instead, set your goal to a high, but not outrageous, level so that if you happen to hit it, you’d be happy to build the product.

Prime the pump. You know how baristas never put an empty tip jar out? It’s because people feel weird being the first to do something (e.g. first person to tip, first person to back a crowdfunding project, etc.). Since we want to mimic real-world market conditions as quickly as possible, we don’t want the first visitors to our site to feel like “oh, well no one else has supported this, so it must be dumb.” To avoid that, I started my backer count at 114 (with a goal of trying to reach 5,000). It wasn’t so high that I felt consumers would feel misled about the popularity of the product, but it also wasn’t an empty tip jar.
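One way a seeded backer count could be wired up, sketched in Python. This is an assumption about the approach, not Selfstarter’s code and not necessarily how Bounce did it. The function and constant names are hypothetical; only the numbers 114 and 5,000 come from the text above.

```python
SEED_BACKERS = 114   # starting count mentioned above
BACKER_GOAL = 5000   # published backer goal mentioned above


def displayed_backers(real_preorders, seed=SEED_BACKERS):
    """Backer count shown on the page: the seed plus actual pre-orders."""
    return seed + real_preorders


def progress_percent(real_preorders, goal=BACKER_GOAL, seed=SEED_BACKERS):
    """Progress-bar fill as a percentage, capped at 100."""
    return min(100.0, 100.0 * displayed_backers(real_preorders, seed) / goal)


if __name__ == "__main__":
    print(displayed_backers(0), progress_percent(0))
```

Keeping the seed as a separate constant means the real pre-order count stays untouched in your data, so the $/visitor analysis is never inflated by the display number.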

Don’t charge credit cards until the product launches. Kickstarter lets you have customer money as soon as you reach your goal, but unless you need the money to pay for upfront costs, I’m a much bigger fan of not charging customers until you’ve actually launched the product. This builds more trust with your customers, it gives you an incentive to deliver, and if you happen to miss a deadline, at least you aren’t earning interest on your customers’ cash.

Only the beginning

This was a first crack at crowdtesting. As we learn more we’ll continue to post information. If you’re interested, you can subscribe for updates via Email or RSS.

Please, if you have any questions or comments send them our way. Also, if you run your own crowdtest, we’d love to hear about it (and have you post about it)!

In many cases, depending on the product, the customer wants their widget yesterday, so agreeing to buy it if you hit a certain goal doesn’t work. How do you feel about selling it as if it is available, and when they go to checkout, just before they enter their payment info, telling them “This product is not in stock, we’d like to give you a coupon to get it at a reduced price some time in the future, sorry for the inconvenience”? Is it dishonest, since we know that upfront but are not telling the customer, or is it along the same lines of honesty as not telling them there is a certain competitor with a better, cheaper solution? I’d say the latter, that it isn’t any more dishonest than giving a sales pitch without telling all the known drawbacks (and every product has drawbacks). Hey, we’re not all Jesus. Curious on your thoughts.

Great question. You can go either way, both of which have their pluses and minuses.

Personally, I prefer to be honest with my customers so I default to saying it’s a pre-order but I don’t go over the top about it. In other words, it’s not the first thing they see, but I try to make it clear during the checkout process that it’s a pre-order.

Of course you’re right, the downside to this approach is that fewer people will pre-order a product to be delivered in the future than will order a product available today. To take that into account, I usually assume my pre-order rate will be some percentage lower than my sales rate when the product is available. That means I might say: if I can reach 50% of my sales goal with pre-orders, and I assume my sales will double when the product launches, then I should be able to hit my sales goal once I launch.

Hopefully that makes sense.

The “we encountered an error” route can work too, but it starts a pattern of disappointing your users, which may not be the best for your long-term relationship with them.

Yeah, that makes total sense. If you’re doing an A/B test for example … so long as the conditions are the same (other than the tested criteria), it’s okay if you lose customers due to the conditions of the test. I had not thought of it that way. Thanks!

Hey Justin, Again- this was an amazing, game changing post for me. Thanks so much. Since your insights are really cutting edge, i have a question that is not so cutting edge;)

You wrote, under #7 Send Traffic, the following;

“As far as how I generated my traffic. I had posted a landing page collecting email addresses for people interested in Bounce for iPhone, before. The majority of my traffic came from Hacker News, press, and emails to folks who signed up on Bounce’s landing page.”

For me this has been the hardest part…i only wish i could get a 1000 visitors to one of my landing pages! I’ve read some of the other lean sites about sending traffic but am still looking for some advice with more teeth. Kinda step by step like the other great articles you’ve written.

So i guess my questions are;

1) Did you ever write a blog that addressed driving qualified traffic to a landing page before in that step by step style?

2) If not, could you recommend a couple specific blogs that i should burn the midnight oil reading?

This has been the only part of the lean process that i’m still not comfortable with, and i think, i’m probably representing a fair share of people.

Anyway- ANY advice, recommendations, referrals, etc, would be very much appreciated.

Thanks all

This is awesome, but can we take it a step further?

I wish there was a platform where you could submit your RAW ideas (just a paragraph and maybe a short video, nothing fancy), and quickly & effortlessly gather some data on whether it’s a good idea to even do research on. No payments or the like… just an effective barometer for ideas.

Any… ideas?

In this scenario it’d be really important to ask yourself, “What am I trying to learn?” If you’re not collecting money (to test whether people will pay), but instead collecting “data on whether it’s a good idea to even do research on”, you’re probably trying to answer the question, “Is this a problem my customer segment has?” or “Will this solution solve the problem my customers have?”

If you’re trying to answer the former, 1-on-1 customer interviews are my favorite type of experiments and paper/powerpoint prototypes are my favorite for the latter.

That said, the most crucial point when designing an experiment is to be very explicit about what you want to learn. Posting an idea on a website and getting feedback from random potential customers on it isn’t going to give you valid, actionable feedback on whether it’s worth pursuing. It’s going to give you a pile of opinions, which probably aren’t worth the time it would take to get them.

Thanks for the question! Let me know if I haven’t answered it or you have other thoughts.

Cheers.

Fantastic tool. Will definitely recommend at our workshops.

Great Post! I’m going to do something similar to test my product and wanted to know how you actually changed the backer count in the code?

What happens when the users who paid $10 find out that other people got the exact same deal for $5? I understand that this is going to happen due to the nature of A/B testing, but I’m just curious what your thoughts are.

It’s a fantastic question, one I haven’t nailed down an answer to yet. In fact, I’ve received a couple emails from people who have already noticed price discrepancies and I’ve been wary to reply. Here are the available options I see, feedback requested:

Some of these options I like more than others, but I’d love to hear what y’all think.

Thanks for the great question Scott.

I’m interested in the pricing question, not least as I’m testing for a service that has a subscription fee.

Take no money feels like the best option as it seems fairest and honest.

In my case, I’d offer one month free (for the inconvenience) and invite them to join for the final price.

Obviously you don’t have this flexibility for a one time product.

In your position, I’d consider giving it away for free to pay for the inconvenience/minor annoyance of participating in some undeclared market research.

Whichever approach, my goal would be to leave the customers in a position where they feel they’ve been dealt a fair hand and not been deceived.

I’ve explored the pricing question for years, though not in a crowdtesting environment. I prefer value-based pricing for my marketing services, recognizing that a customer lead is worth more to one of my clients in one industry than it is to another client in a different industry. This isn’t practical in your example but I think you arrive at a similar conclusion if you clearly post your prices. Your item was worth $10 to someone who purchased at that price. That value should not diminish simply because he learned that someone else paid $5.

Great post Justin, perfect timing, I think I will put your instructions to the test! :)

Thanks so much! _Please_ let me know how it goes (and how we can improve). Would also love to share your story when it’s ready.

Wei, question of clarification – is there anything repetitive about the class? That is, is the same session offered once a month? Or is the session totally unique each time, as in different speakers come and talk about completely unrelated topics? Clearly getting feedback on a class after it has been offered is much slower-paced than an online product with hundreds or even thousands of customers, but I can certainly imagine ways to test it. For example, if you’ve built up your audience of people who want to attend and you have fewer seats than participants, why not try a secret auction of sorts? Tell them you’ll charge them the minimum bid for a seat (within a reasonable price range) and that anyone under the selected minimum will lose the auction and won’t be able to participate. This will give them incentive to bid the price they’re actually willing to pay. You could also try offering two very slightly different versions of the same class, but with different prices. Or the same class but one with an additional value like a special office hours session. Split the two offerings among your participants and see what the reaction is. If they learn about the different offers, you can justify the difference in price with the value-add. After testing you can notify people of the more popular option so they can switch or just cancel the one that is unpopular. Lots of ideas here, but I’m writing too much. I’ll leave it to Justin to share his wisdom (and refute my suggestions if he disagrees).

Hakon, you’re a better customer dev labs blogger than I am. Thanks so much for your help. Adam of Lean Startup Machine and I were just chatting about dynamic event pricing. If you want to test an idea together, let’s talk ;)

I’m just a fan that talks too much. ;) Appreciate the compliment.

Running a test on dynamic event pricing would be really interesting. Seems like a perennial problem that could use some love. Contact me any time.

Justin,

I enjoyed your session at Monday’s Lean Startup conference. Similar question as Wei’s – how might crowdtesting work for service offerings, particularly those that need to be delivered in person vs online?

David

Thanks for the question David! I just left a detailed reply to Wei’s comment, so take a look there and let me know what you think.

Re offering services vs a product, the answer depends on what you’re trying to learn. If you’re trying to learn what price you should offer your service at, I think we can just use the same model. Let’s pretend you’re a marketing consultant and you want to know if you should offer 1-hour drop-in consulting rates for local startups. Throw up a crowdtesting page describing that service, a/b test it with a couple price points, and then say, “If I get 25 sign-ups within a month, they’ll happen. If not, they won’t.”

If on the other hand you’re simply trying to figure out if there’s demand for this service, you probably don’t need crowdtesting at all. You just need some traffic and a way to accept orders. If you drive some traffic to your site and it meets your threshold for being a viable offering, awesome, do it. If however you don’t get enough conversions, time to go back to the drawing board and figure out what customers really need.

Please hit me back if I haven’t fully answered your question. Want to get this right.

Hi Justin,

I read the post, found it super informative and am wondering how to do it for myself.

Question I want to answer is ‘How much will a user pay for each SESSION of a class?’

My impression now is that crowdtesting is for a product and not appropriate for ‘per session classes’.

Any thoughts or pointers there?

Thanks!

Wei

Thanks for the question Wei! Maybe it’ll help to break down crowdtesting to its most basic level:

It’s an a/b tested order form, with a progress bar.

All we’re really doing is taking a normal order/credit card processing page and slapping a progress bar on top of it that has the socially accepted meaning, “If the progress bar doesn’t reach 100%, customers won’t be charged for this. If it does, customers will get what they ordered.”

With that in mind, it feels like anything with a price tag that has enough interest to support an a/b test could be crowdtested.

I’m imagining you describing your session on a page, perhaps with a video, and then making two versions of the page: one at $X and one at $Y, and measuring which one brings in more revenue. As long as you can drive enough traffic so that you feel comfortable with the results, you should be able to price the session appropriately.

This is a really important question, so please let me know if I haven’t fully answered it.

OK I have a similar question… if our product is an app store membership priced on a monthly basis (proposed), how do we test the pricing as you describe? Do we take the $1.99 hypothesis and multiply by 12 for a proposed price of $24 for a YEAR membership to our platform? If so we’ll have to go in multiples of that for price testing, like $12, $24, $36 and maybe a higher one? $48 or even $72 for the year? Can we test 5 price points at once within a split campaign? Or is that silly? Justin, you are so awesome that I just can’t contain myself sometimes. Thanks again for your incredible entrepreneurial mentorship!